SK hynix unveils HBM3E, supplies sample to Nvidia

World's 2nd-largest memory chipmaker seeks to cement leadership in burgeoning HBM market

By Jo He-rim

Published: Aug. 21, 2023 - 15:33

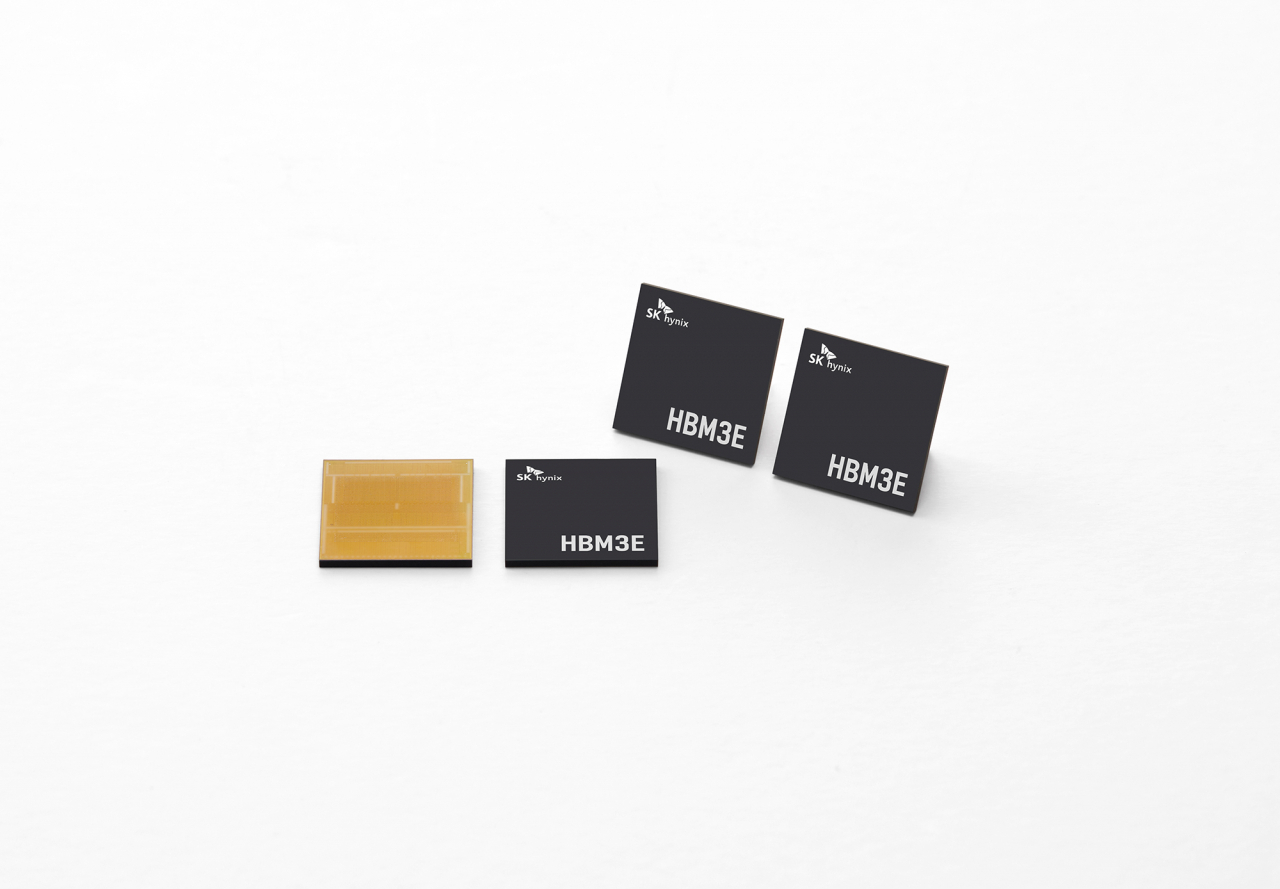

South Korean chipmaker SK hynix announced Monday that it has successfully developed HBM3E, its next-generation, top-performing DRAM chip for artificial intelligence applications. The chipmaker also said a customer is evaluating a sample of the new product.

High Bandwidth Memory is a high-value, high-performance memory that vertically interconnects multiple DRAM chips, enabling a dramatic increase in data processing speed compared with earlier DRAM products. HBM3E is the extended version of HBM3 and the fifth generation of its kind, succeeding HBM, HBM2, HBM2E and HBM3.

The world’s second-largest memory chipmaker said the development of HBM3E builds on its experience as the industry’s sole mass producer of HBM3.

“The company, through the development of HBM3E, has strengthened its market leadership by further enhancing the completeness of the HBM product lineup, which is in the spotlight amid the development of AI technology,” said Ryu Sung-soo, head of DRAM product planning at SK hynix.

“By increasing the supply share of high-value HBM products, SK hynix will also seek a fast business turnaround.”

The chipmaker said it plans to begin mass production of HBM3E in the first half of next year to cement its leadership in the AI memory market. Nvidia, a prominent GPU manufacturer, is currently evaluating a sample of SK hynix's new product.

“We have a long history of working with SK hynix on High Bandwidth Memory for leading edge accelerated computing solutions,” said Ian Buck, vice president of hyperscale and HPC computing at Nvidia.

“We look forward to continuing our collaboration with HBM3E to deliver the next generation of AI computing.”

According to the company, the latest product meets the industry’s highest standards not only in speed -- the key specification for AI memory products -- but also in all other categories, including capacity, heat dissipation and user-friendliness.

SK hynix said HBM3E can process data up to 1.15 terabytes a second, which is equivalent to processing more than 230 full-HD movies of 5 GB each in a second.
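The movie comparison can be checked with simple arithmetic. The sketch below assumes decimal units (1 terabyte = 1,000 gigabytes), which the company's figure appears to use; the function name and parameters are illustrative and not taken from SK hynix.

```python
def movies_per_second(bandwidth_gb_per_s: int, movie_size_gb: int) -> int:
    """Return how many whole movies of a given size fit into one second of bandwidth."""
    return bandwidth_gb_per_s // movie_size_gb


# Assumption: 1.15 TB/s expressed in decimal gigabytes (1 TB = 1,000 GB).
hbm3e_bandwidth_gb_per_s = 1150
full_hd_movie_gb = 5

print(movies_per_second(hbm3e_bandwidth_gb_per_s, full_hd_movie_gb))
```

Under that assumption, 1,150 GB divided by 5 GB per movie gives 230 movies per second.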

The product also delivers a 10 percent improvement in heat dissipation through the use of the company's Advanced Mass Reflow Molded Underfill, or MR-MUF, technology, the company said. It is also backward compatible, so it can be adopted in systems designed for HBM3 without any design or structural modification, SK hynix added.

While HBM3 is the most up-to-date version of the chip on the market, the HBM3E memory to be used in Nvidia’s newest AI chip is 50 percent faster, Nvidia said. HBM3E delivers a total of 10 terabytes per second of combined bandwidth, allowing the new platform to run models 3.5 times larger than the previous version while improving performance with three times the memory bandwidth, Nvidia said.

SK hynix has been considered the front-runner in the HBM market, holding almost 50 percent of the market as of 2022, according to data released by TrendForce. For this year, the market tracker expects Samsung Electronics and SK hynix to each take 46 to 49 percent of the market, and Micron Technology to take 4 to 6 percent.
